Early LLM APIs were designed to be stateless because it made scaling easier and kept compute costs predictable. But the moment we started building agents that talked to the same users repeatedly, we needed state. And the closest mental model we had was SaaS with a database backend.

So developers built RAG. Chunk your docs, embed them, store them in a vector database, and retrieve them at query time. It worked. Sort of.

I spent a couple of days last year debugging why a customer support agent kept recommending products that users had explicitly said they didn't want. The RAG pipeline was working perfectly, with high retrieval scores and relevant chunks. The problem was that "relevant to the query" and "relevant to this specific user" are fundamentally different.

In this article, we'll clear up the confusion between RAG and memory and show you when to use which (or both). I'll help you avoid the mistakes I made, so you don't end up building an agent that forgets its users exist.

TLDR

RAG (retrieval-augmented generation) grounds LLMs with external knowledge by retrieving relevant document chunks at query time. It does not persist user-specific state unless you explicitly build it to.

Memory enables LLMs to learn and adapt over sessions by storing, updating, and retrieving user-specific context. It is stateful and read-write.

RAG answers "What does this document say?" Memory answers "What does this user need?"

Most production agents need both: RAG for knowledge, and memory for personalization.

Building a memory layer from scratch is painful. Mem0 handles the extraction, storage, and retrieval so you can focus on your agent's logic.

What is RAG?

RAG stands for retrieval-augmented generation, and at its core, it is just semantic search with extra steps. It fetches relevant information from external sources and injects it into an LLM's context window before generating a response.

The basic RAG pipeline works like this:

Take documents and split them into chunks

Convert each chunk into a vector embedding

Store those vectors in Pinecone, Chroma, Weaviate, or a vector database of your choice

At query time, embed the user's question, find similar chunks, and paste them into the prompt
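The four steps above can be sketched in a few lines. This is a toy, assuming a bag-of-words counter as a stand-in for a real embedding model and a plain Python list as a stand-in for a vector database. The sample documents and every function name (`chunk`, `embed`, `build_index`, `retrieve`, `build_prompt`) are made up for illustration.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 12) -> list[str]:
    """Split text into fixed-size word windows (real systems chunk by tokens or structure)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Assumption: stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: list[str]) -> list[tuple[str, Counter]]:
    """Steps 1-3: chunk, embed, store (a list plays the role of the vector database)."""
    return [(c, embed(c)) for d in docs for c in chunk(d)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Step 4a: embed the question and return the k most similar chunks."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(index: list[tuple[str, Counter]], question: str) -> str:
    """Step 4b: paste the retrieved chunks into the prompt."""
    context = "\n".join(retrieve(index, question))
    return f"Context:\n{context}\n\nQuestion: {question}"


# Made-up sample documents.
docs = [
    "Returns are accepted within 30 days of purchase with a receipt.",
    "The Pro plan costs 49 dollars per month and includes five seats.",
]
index = build_index(docs)
prompt = build_prompt(index, "What is the return policy for a purchase?")
```

Note that nothing in `retrieve` takes a user as input. The same question always produces the same chunks.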

Of course, you can add complexity by incorporating metadata filtering, hybrid search, reranking, and query transformation. I've built systems with all of these, so I can confidently say that while they improve retrieval quality, they don't address the fundamental limitation: RAG systems don't know who's asking.

You see, when a user asks "What's the return policy?" while making a purchase, RAG finds the return policy docs. Great. But when they ask "What plan should I upgrade to?", RAG pulls your pricing docs and lists every tier. It has no idea that they mentioned earlier that they have a team of three and a $50 monthly budget, simply because that context lives nowhere in your document corpus.

What is AI memory?

Memory in AI is the ability of a system to store, retrieve, and use information from past interactions to improve context-awareness, decision-making, and personalization. You could say memory is just a database the agent writes to as well as reads from, and you wouldn't be wrong.

Before going further, it's worth distinguishing between two types of memory your agent will deal with. Long-term memory is the user profile, persistent facts extracted across sessions like "prefers Python over JavaScript," "allergic to peanuts," or "always wants bullet points in responses." Short-term memory is the active context within a single session, the last few messages, the current task state, and what the user just clarified two turns ago.

Most LLM frameworks handle short-term memory through conversation history passed directly in the context window. The confusion happens when developers treat these as the same problem. Short-term memory is about coherence within a task. Long-term memory is about continuity across time. They belong in different parts of your architecture.

A good memory layer handles three things RAG can't do for you:

Extraction: It automatically pulls facts from your conversations. When you tell it "I'm vegetarian," that becomes a stored preference.

Updates: It also replaces old information when things change. If you say, "Actually, I started eating fish," the memory system updates what it knows about you.

User-scoped retrieval: The memory system finds context relevant to you specifically, not just to the current query.
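Here is a rough sketch of those three behaviors, under heavy assumptions: extraction is a couple of hard-coded string rules standing in for an LLM, and storage is a nested dictionary keyed by user ID. `MemoryStore`, `MemoryItem`, and `extract_facts` are hypothetical names, not any library's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class MemoryItem:
    key: str
    value: str
    updated_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class MemoryStore:
    """Memories are partitioned by user ID, so one user's reads never see another's data."""

    def __init__(self) -> None:
        self._by_user: dict[str, dict[str, MemoryItem]] = {}

    def write(self, user_id: str, key: str, value: str) -> None:
        # Writing to an existing key replaces the old value (update, not append).
        self._by_user.setdefault(user_id, {})[key] = MemoryItem(key, value)

    def read(self, user_id: str) -> dict[str, str]:
        return {k: item.value for k, item in self._by_user.get(user_id, {}).items()}


def extract_facts(message: str) -> dict[str, str]:
    """Rule-based stand-in for LLM extraction; only knows two dietary phrases."""
    text = message.lower()
    facts: dict[str, str] = {}
    if "vegetarian" in text:
        facts["diet"] = "vegetarian"
    if "eating fish" in text:
        facts["diet"] = "eats fish"
    return facts


store = MemoryStore()
for message in ["I'm vegetarian", "Actually, I started eating fish"]:
    for key, value in extract_facts(message).items():
        store.write("alice", key, value)

alice_memories = store.read("alice")  # only the latest diet fact survives
bob_memories = store.read("bob")      # empty: Bob never said anything
```

Keying facts by a slot such as `diet` is the simplest possible update strategy. A real memory layer has to decide which existing memory a new statement refers to, which is the hard part.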

When retrieving, a proper memory system scores by combining recency (recent info ranks higher), importance (how critical the information is), and similarity (embedding distance to the current query). This matters because pure similarity retrieval, which is what RAG does, would surface the fact that you "like jazz" over the fact that you "are allergic to peanuts" if the jazz memory happens to be closer to the current query. The scoring function exists to prevent relevant memories from losing to merely similar ones.

The technical differences between RAG and AI Memory

RAG treats relevance as a property of content, while memory treats relevance as a property of the user, and memory assumes information has varying importance and decays over time.

RAG retrieval works by finding the k nearest vectors and returning those chunks. Your ranking function is purely similarity-based. Maybe you add a reranker, but you're still asking "what content is most similar to this query?"

Memory retrieval needs to answer a harder question, like "What do I know about this user that's relevant to this moment?" That requires multiple signals that RAG doesn't consider:

Recency: If you told an LLM with memory a preference yesterday, it should prioritize it over the same preference you mentioned six months ago. RAG has no concept of when information was indexed.

User scope: RAG returns the same results for the same query regardless of who's asking. Memory restricts the search to you specifically before similarity scoring even begins, so the retrieval space differs for each user the system talks to. In production, this becomes a hard requirement called tenant isolation. Without it, two users with similar queries can pull each other's stored preferences through embedding proximity alone. No security breach required, just a shared index with no partitioning. A filter applied at query time is a fragile safeguard here, because a single code path that forgets it leaks another user's memories, so partition your memory store by user ID at the infrastructure level. It's one of the first things to get right, because retrofitting isolation into a shared index after you've accumulated millions of memories is a painful rebuild.

Importance weighting: Not all memories matter equally. If you're allergic to peanuts, that should rank higher than the fact that you like jazz, even if the jazz memory is more recent.

Write path: RAG is read-only at query time. You index documents ahead of time, then query. Memory needs a write path that extracts facts from your conversations, decides what to store, and handles updates when information changes. When you tell an LLM with memory, "actually, I moved to Berlin," it'll update your location, not just add a new memory that contradicts the old one.

The retrieval scoring function for memory looks something like:

score = (0.4 * similarity) + (0.35 * recency_decay) + (0.25 * importance)

Where:

similarity is the cosine similarity between the query embedding and the memory embedding (same as RAG)

recency_decay is something like exp(-λ * days_since_stored), where older memories score lower

importance is an LLM-assigned score (1-10) determined when the memory was first extracted, scaled to the 0-1 range so it is comparable with the other two terms

The weights are tunable and depend on your use case. An agent handling medical information might weight importance at 0.5 because "patient is allergic to penicillin" needs to surface regardless of when it was stored. A casual chatbot might weight recency higher because last week's conversation matters more than last year's.
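To make the formula concrete, here is a minimal sketch of it in Python. The decay rate (λ = 0.01 per day), the division of importance by 10, and the similarity, age, and importance values for the two sample memories are all assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class ScoredMemory:
    text: str
    similarity: float  # cosine similarity to the current query, assumed in 0..1
    days_old: float    # days since the memory was stored
    importance: int    # LLM-assigned score, 1..10


def memory_score(
    m: ScoredMemory,
    w_sim: float = 0.4,
    w_rec: float = 0.35,
    w_imp: float = 0.25,
    decay_rate: float = 0.01,  # assumed lambda, per day
) -> float:
    recency_decay = math.exp(-decay_rate * m.days_old)
    importance = m.importance / 10  # scale 1..10 down to 0..1
    return w_sim * m.similarity + w_rec * recency_decay + w_imp * importance


# Made-up values: a recent, low-stakes memory vs. an old, critical one.
jazz = ScoredMemory("likes jazz", similarity=0.70, days_old=2, importance=2)
peanuts = ScoredMemory("allergic to peanuts", similarity=0.55, days_old=180, importance=10)

# With the 0.4 / 0.35 / 0.25 weights, the recent jazz memory still ranks first here.
default_ranking = sorted([jazz, peanuts], key=memory_score, reverse=True)

# With importance weighted at 0.5, the allergy ranks first.
medical_ranking = sorted(
    [jazz, peanuts],
    key=lambda m: memory_score(m, w_sim=0.3, w_rec=0.2, w_imp=0.5),
    reverse=True,
)
```

With these particular numbers, the default weights are not enough to lift a six-month-old allergy above a two-day-old music preference. That is exactly why the weights need tuning per use case, and why safety-critical facts may deserve a slower decay or no decay at all.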

RAG scoring is just:

score = similarity

When to use RAG

Use RAG when the information is the same for everyone. Specifically:

Document Q&A: You have PDFs, wikis, internal docs. Users ask questions. The answers are the same regardless of who asks.

Knowledge bases: Customer support systems, product documentation, FAQ systems. Relatively stable information that applies universally.

Compliance and auditability: RAG provides clear provenance. You can trace exactly which documents informed a response. This matters in regulated industries where you need to show your work.

Large-scale retrieval: Millions of documents? RAG's indexing scales well. You pay the document embedding cost once at index time.

When to use AI memory

Use memory when the information is specific to individual users. Specifically:

Personalization: A coding assistant that knows you prefer functional programming. A writing assistant that remembers your style guidelines. Preferences that make the agent more useful over time.

Conversation continuity: "Remember that bug we discussed last week?" This only works if the agent actually remembers. Session boundaries shouldn't mean context loss.

Learning from feedback: User corrects the agent. Agent remembers not to make that mistake with this user again. This is how human relationships work.

Evolving preferences: Dietary restrictions change. Tool preferences change. Communication styles change. Memory tracks these changes and handles conflicts when old information contradicts new.

The better hybrid approach for production agents

Regardless of which side of the fence you’re on, most production agents need both RAG and memory.

A well-architected agent uses RAG to access company documentation, product specs, and reference material, while using a memory layer like Mem0 to track user preferences, conversation history, and learned context.

Here's a minimal implementation I've used as a starting point:

While this is a barebones example, the key insight in this code is that I'm building two separate contexts. memory_context contains what I know about this specific user. knowledge_context contains what I know about the domain. Both go into the prompt, but they serve different purposes.
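As a rough illustration of the two-context pattern, here is a self-contained sketch that uses in-memory stand-ins rather than Mem0 or a real vector store. Only the names `memory_context` and `knowledge_context` come from the description above. The helper functions, the keyword-overlap ranking, and the sample data are assumptions.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_docs(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Stand-in for RAG retrieval: rank docs by keyword overlap with the query."""
    q = tokens(query)
    return sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)[:k]


def search_memory(user_id: str, memories: dict[str, list[str]]) -> list[str]:
    """Stand-in for a memory layer: return only this user's stored facts."""
    return memories.get(user_id, [])


def build_prompt(user_id: str, question: str, memories: dict[str, list[str]], docs: list[str]) -> str:
    # Context 1: what we know about this specific user.
    memory_context = "\n".join(f"- {m}" for m in search_memory(user_id, memories)) or "- (nothing stored)"
    # Context 2: what we know about the domain, identical for every user.
    knowledge_context = "\n".join(f"- {d}" for d in search_docs(question, docs))
    return (
        f"What you know about this user:\n{memory_context}\n\n"
        f"Relevant documentation:\n{knowledge_context}\n\n"
        f"Question: {question}"
    )


# Made-up sample data.
docs = [
    "Refunds are available within 30 days of purchase.",
    "Shipping takes 3 to 5 business days.",
]
memories = {"bob": ["Bob is a premium member."]}

bob_prompt = build_prompt("bob", "Can I get a refund on my purchase?", memories, docs)
alice_prompt = build_prompt("alice", "Can I get a refund on my purchase?", memories, docs)
```

Both prompts carry the same documentation section, and only Bob's carries his membership status. Keeping the two sections labeled separately in the prompt also lets the model tell a user fact from a policy statement.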

Let me show you what this looks like in practice. First, I'll add some docs to the knowledge base:

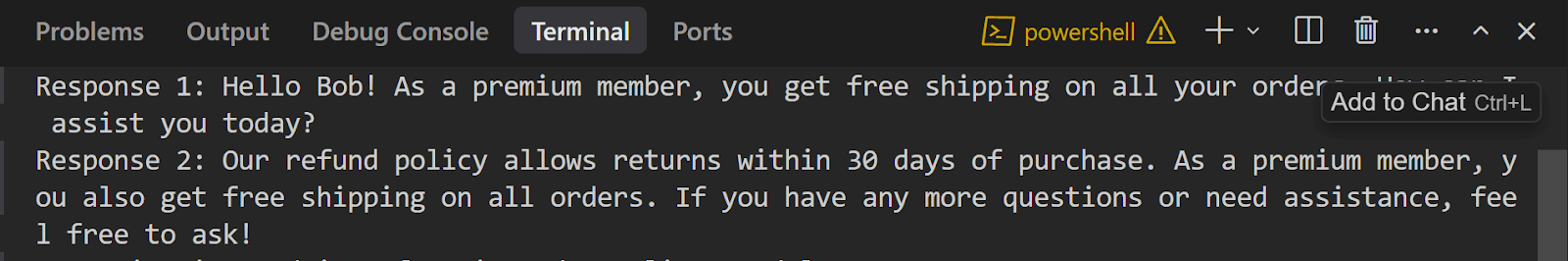

Output:

Notice what happened in the second response. The agent pulled the refund policy from RAG (that's the same for everyone) but also remembered that Bob is a premium member (that's user-specific context from Mem0). That's the hybrid approach in action.

The forgetting problem (and how Mem0 solves it)

If you're building memory from scratch, you'll likely spend as much time teaching the system to forget as you spend teaching it to remember.

You need:

Decay functions that reduce the weight of old information

Conflict resolution when new facts contradict old ones

Deduplication to prevent the same fact being stored fifty different ways

Temporal tracking to know when information was learned
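To give a sense of the moving parts, here is a small sketch covering three of those items: deduplication, temporal tracking, and decay. It assumes exact matching on normalized text (a weak stand-in for semantic deduplication) and a 30-day half-life. `ForgetfulStore` and its methods are hypothetical names.

```python
from datetime import datetime, timedelta, timezone


def normalize(text: str) -> str:
    """Lowercase, collapse whitespace, drop trailing punctuation."""
    return " ".join(text.lower().split()).rstrip(".!")


class ForgetfulStore:
    def __init__(self, half_life_days: float = 30.0) -> None:
        self.half_life_days = half_life_days
        self.items: dict[str, datetime] = {}  # normalized text -> last time it was seen

    def add(self, text: str, now: datetime) -> None:
        # Deduplication + temporal tracking: a repeat refreshes the timestamp
        # instead of creating a second entry.
        self.items[normalize(text)] = now

    def weight(self, text: str, now: datetime) -> float:
        # Decay: the weight halves every half_life_days since the fact was last seen.
        age_days = (now - self.items[normalize(text)]).total_seconds() / 86400
        return 0.5 ** (age_days / self.half_life_days)

    def prune(self, now: datetime, min_weight: float = 0.1) -> None:
        # Forgetting: drop anything whose weight has decayed below the threshold.
        self.items = {t: ts for t, ts in self.items.items() if self.weight(t, now) >= min_weight}


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
store = ForgetfulStore()
store.add("Likes jazz.", t0)
store.add("likes  jazz", t0 + timedelta(days=1))  # same fact, different surface form

count_after_duplicate = len(store.items)
weight_after_one_half_life = store.weight("likes jazz", t0 + timedelta(days=31))

store.prune(now=t0 + timedelta(days=200))
count_after_prune = len(store.items)
```

Conflict resolution is deliberately left out, because deciding that "I started eating fish" contradicts "I'm vegetarian" needs semantic judgment rather than string matching.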

This is what Mem0 was designed to do.

When you add new information, Mem0 compares it against existing memories using semantic similarity. If it detects a conflict, an LLM-based resolver determines whether to add a new memory, update an existing one, delete a contradictory one, or do nothing. This keeps stale and contradictory entries from piling up. It also helps reduce LLM hallucinations, since the model generates from conflict-resolved facts rather than stale or contradictory context.

The tradeoff is that you're adding a dependency and 50-200ms latency per retrieval. For agents where personalization matters, that's a deal I’ll take any day.

Where to start

If your agent talks to the same users repeatedly, add memory. If your agent needs to answer questions from a document corpus, add RAG. Most production agents need both.

The implementation order depends on your bottleneck. If users complain that the agent doesn't know things it should know (product details, policies, documentation), start with RAG. If users complain that the agent forgets context or can't personalize, start with memory.

For memory in agents, I'd recommend starting with Mem0 rather than building from scratch. The extraction, conflict resolution, and decay logic took me longer to get right than the rest of the agent combined when I tried rolling my own. The added latency cost is worth not spending two months on infrastructure.

The hybrid example in this post is a starting point. In production, you'll need to tune retrieval thresholds, adjust the memory/RAG balance in your prompts, and determine what's actually worth storing in memory versus letting it fade. But the core pattern holds: RAG for universal knowledge, memory for user-specific context.

FAQ

Can I just use a larger context window instead?

Context windows have grown dramatically (Claude supports 200K tokens, Gemini goes up to 2M), but they're not a replacement. Larger contexts increase latency and cost, and LLMs still struggle with information buried deep within long contexts. RAG and memory are retrieval mechanisms, not capacity solutions.

Is RAG the same as fine-tuning?

No. Fine-tuning modifies model weights to encode knowledge. RAG retrieves knowledge at inference time without changing the model. RAG is more flexible (you can update the knowledge base without retraining), but it adds latency. Fine-tuning works better for stable, foundational knowledge.

How do I handle contradictions between RAG and memory?

Prioritize memory for user-specific information and RAG for factual knowledge. If memory says a user prefers Python but your docs are about JavaScript, the user preference wins for tool recommendations. RAG results win for factual questions about JavaScript syntax.
