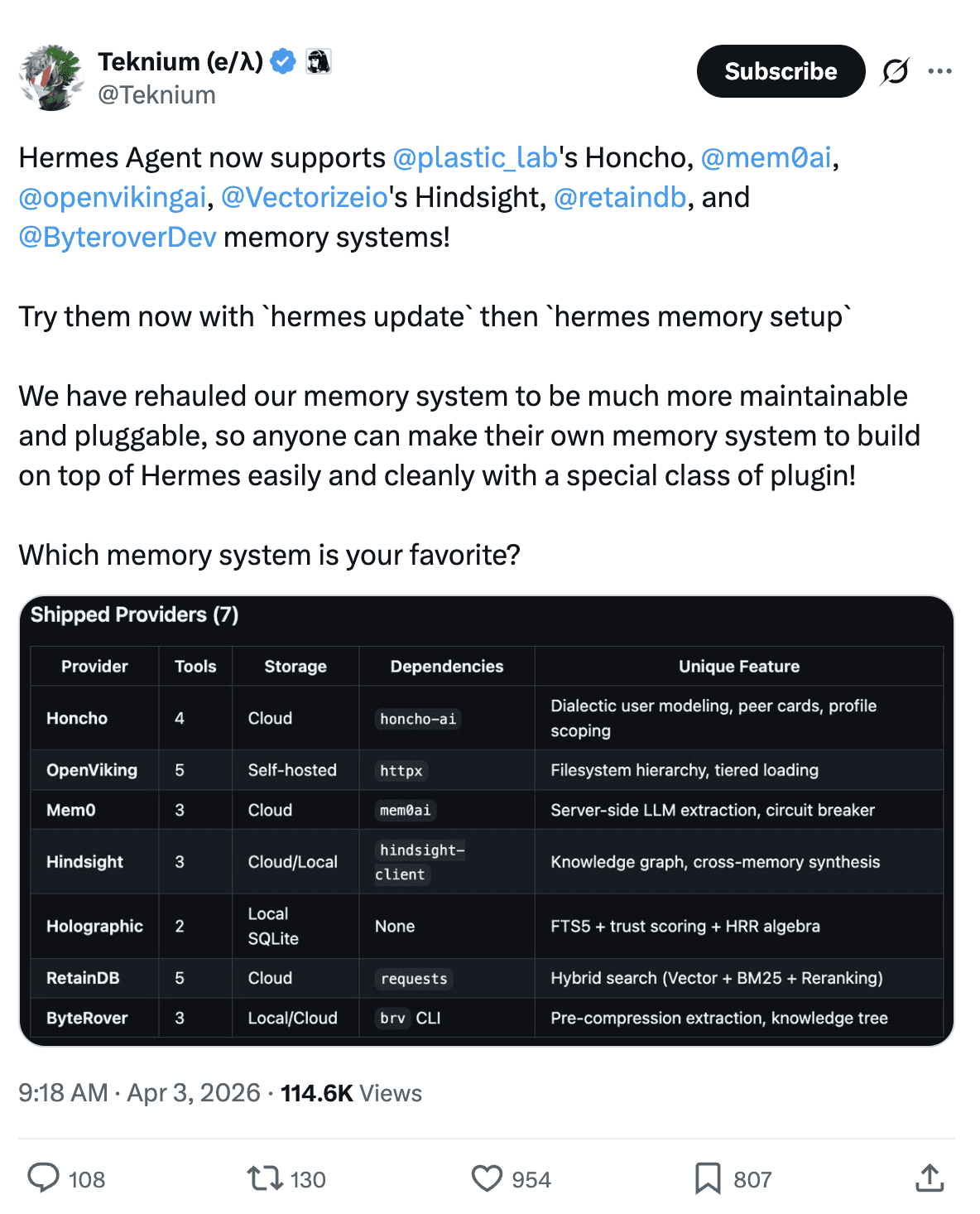

Hermes Agent just added 6 memory providers. Mem0 is one of them. Setup takes one command. A circuit breaker kicks in if anything fails. The memory system handles storage, retrieval, and context across sessions, so your agent actually remembers. Here's how it works.

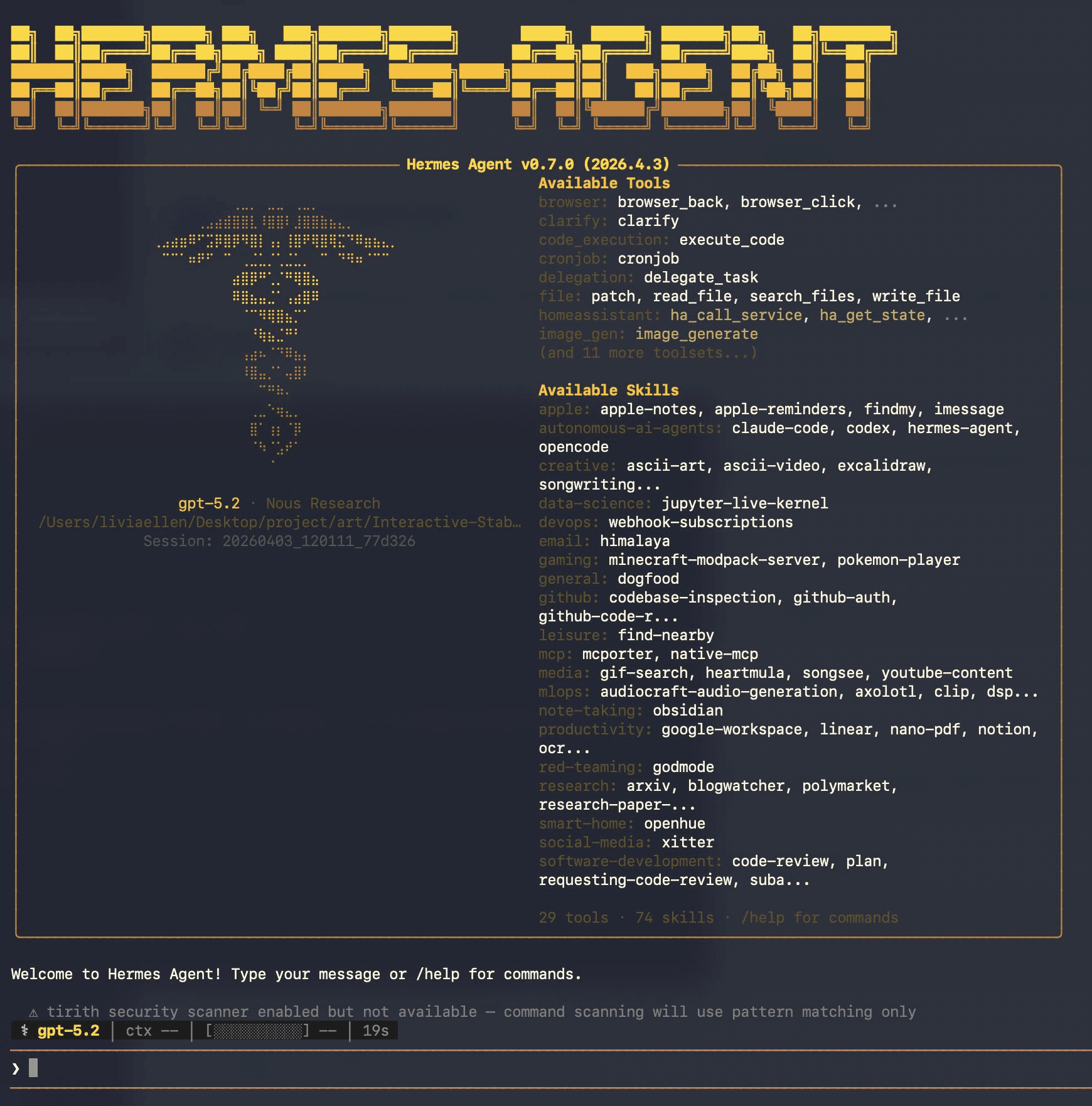

What Is Hermes Agent?

Hermes is a self-improving AI agent CLI by @NousResearch. Built for long-horizon tasks that can take minutes to hours.

Hermes Agent (source: screenshot from personal usage)

It already had a local memory system (MEMORY.md and USER.md files). Now it adds a pluggable external provider slot with six supported providers. Mem0 is one of them.

How Memory Works

Hermes runs memory at 3 points in every conversation turn:

Hermes Agent Memory Flow

Before the agent responds: Cached Mem0 results from the previous turn are injected into the system prompt. Zero latency, no API call.

After the agent responds: Hermes sends the (user message, reply) pair to Mem0 in a background thread. Mem0 auto-extracts facts. You never tell it what to remember.

Between turns: Hermes pre-fetches relevant memories in the background so they're ready before your next message.
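The three points above can be sketched in a few lines of Python. This is a minimal illustration, not Hermes' actual code: `FakeMemoryBackend` and `TurnMemory` are hypothetical names, the backend is an in-process stand-in rather than the Mem0 API, and the sketch assumes the prefetch query is simply the latest user message. For simplicity it also runs the sync and the prefetch in one background thread.

```python
import threading


class FakeMemoryBackend:
    """Hypothetical in-process stand-in for an external memory provider."""

    def __init__(self):
        self._facts = []
        self._lock = threading.Lock()

    def add(self, user_message, reply):
        # A real provider would extract facts server-side; we store the raw pair.
        with self._lock:
            self._facts.append(f"{user_message} -> {reply}")

    def search(self, query):
        # Naive keyword overlap in place of semantic search.
        words = set(query.lower().split())
        with self._lock:
            return [f for f in self._facts if words & set(f.lower().split())]


class TurnMemory:
    """Sketch of the per-turn flow: inject cache, sync in background, prefetch."""

    def __init__(self, backend):
        self.backend = backend
        self._cached = []
        self._lock = threading.Lock()
        self._threads = []

    def build_system_prompt(self, base_prompt):
        # Point 1: read only the cache, so no backend call happens here.
        with self._lock:
            cached = list(self._cached)
        if not cached:
            return base_prompt
        lines = "\n".join(f"- {m}" for m in cached)
        return f"{base_prompt}\n\nRelevant memories:\n{lines}"

    def after_reply(self, user_message, reply):
        # Points 2 and 3: hand the work to a background thread and return at once.
        t = threading.Thread(
            target=self._sync_and_prefetch, args=(user_message, reply), daemon=True
        )
        t.start()
        self._threads.append(t)

    def _sync_and_prefetch(self, user_message, reply):
        self.backend.add(user_message, reply)          # sync the turn
        results = self.backend.search(user_message)    # prefetch for the next turn
        with self._lock:
            self._cached = results

    def wait(self):
        # Only needed in tests or at shutdown, to let background work finish.
        for t in self._threads:
            t.join()
        self._threads.clear()
```

The key design choice is that `build_system_prompt` never touches the backend. Whatever the background thread has cached by then is what gets injected, which is why the conversation never waits on a network call.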

How Memory Appears in the System Prompt

At the start of every session, memory entries are loaded from disk and rendered into the system prompt as a frozen block:

The format includes:

A header showing which store (MEMORY or USER PROFILE)

Usage percentage and character counts so the agent knows capacity

Individual entries separated by § (section sign) delimiters

Entries can be multiline
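To make the format concrete, here is a small Python sketch that renders such a block. The exact header layout, the `render_memory_block` name, and the character limit are assumptions for illustration. Only the ingredients (store name, usage percentage, character counts, § delimiters, multiline entries) come from the description above.

```python
def render_memory_block(store_name, entries, char_limit):
    """Render entries as one block: a usage header, then §-separated entries.

    The header layout is hypothetical. Usage is counted over the joined body,
    delimiters included, which is also an assumption.
    """
    body = "\n§\n".join(entries)
    used = len(body)
    percent = round(100 * used / char_limit) if char_limit else 0
    header = f"{store_name} [{percent}% | {used}/{char_limit} chars]"
    return f"{header}\n{body}"


if __name__ == "__main__":
    print(
        render_memory_block(
            "USER PROFILE",
            ["Prefers concise answers", "Works in Python\nUses pytest for tests"],
            char_limit=1000,  # hypothetical limit
        )
    )
```

Because the block is rendered once at session start, the agent sees a stable snapshot for the whole session, and the usage figures tell it how much room is left before it should replace or remove entries.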

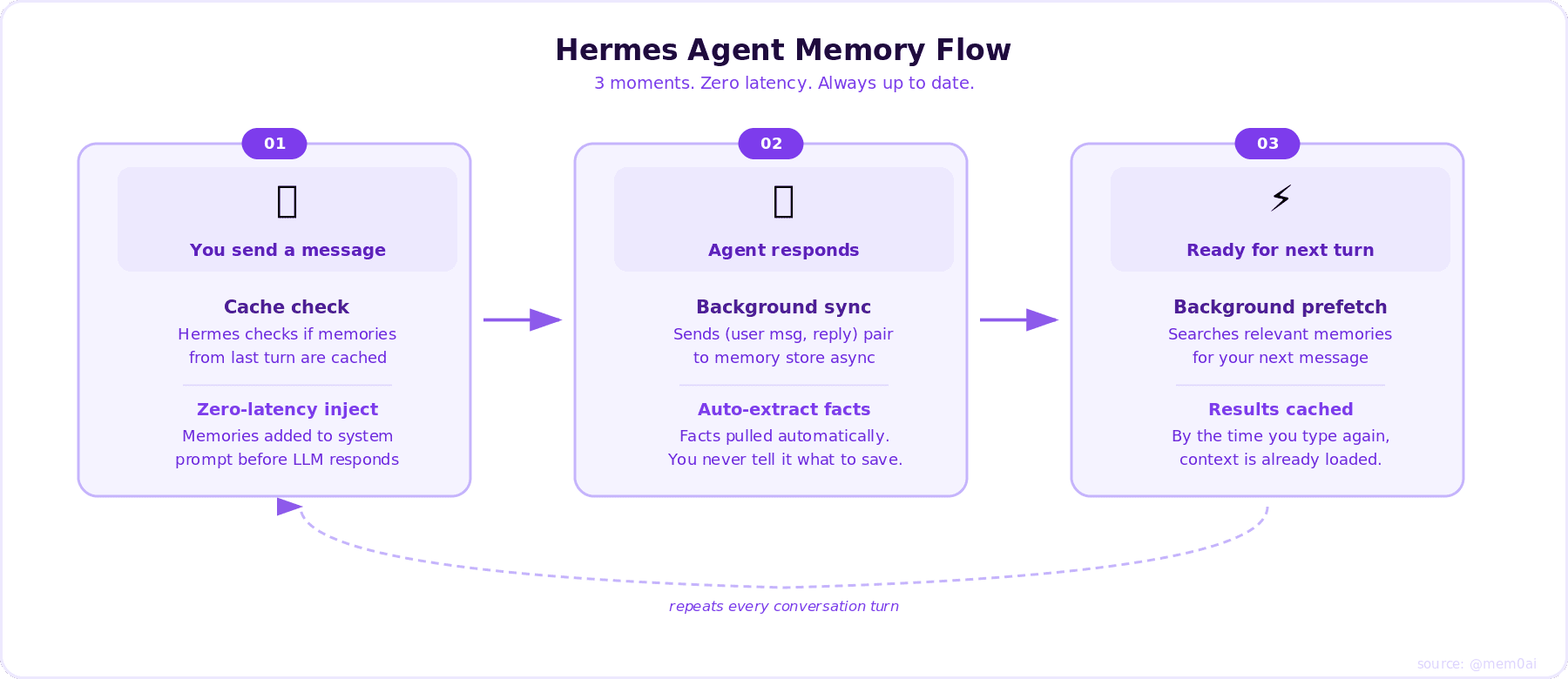

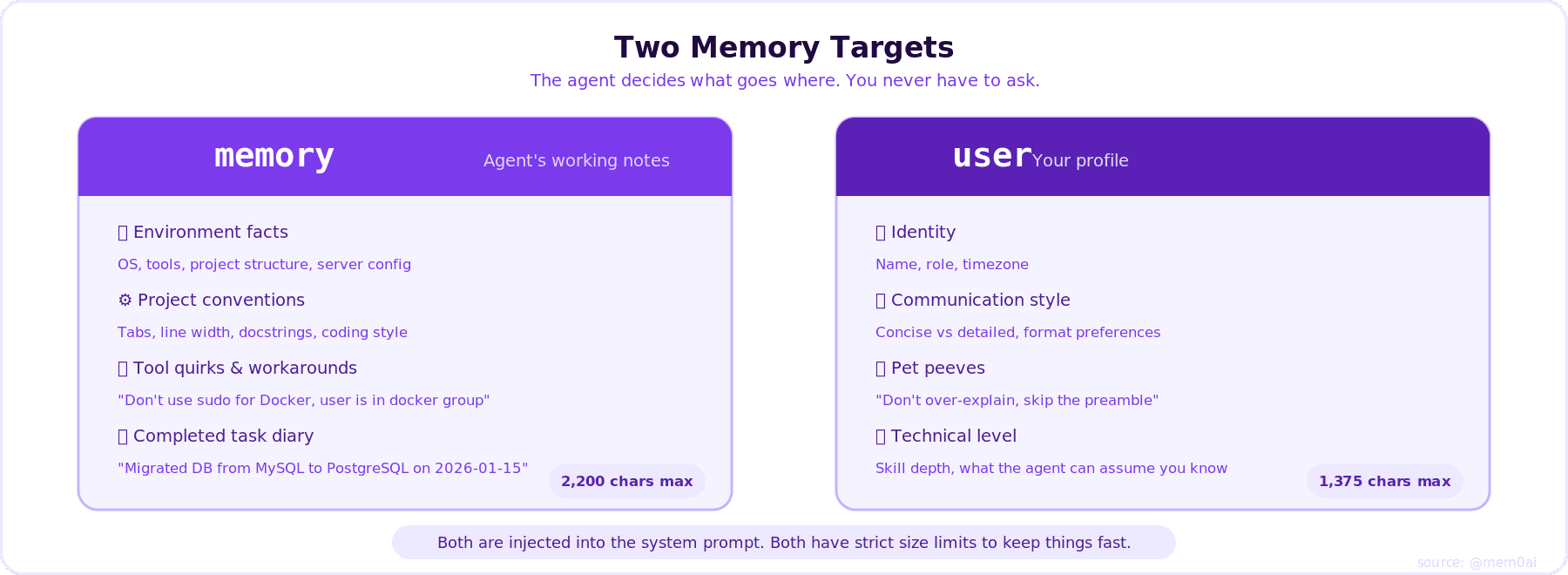

Memory Targets

Hermes writes memories to two targets:

memory stores what the agent needs to operate well: environment facts, project conventions, tool quirks, completed work entries, and techniques that worked. Think of it as the agent's working notes about the environment it lives in.

user stores who you are: name, role, timezone, communication preferences, pet peeves, workflow habits, and technical skill level. The agent uses this to adapt how it talks to you and what it assumes you know.

Memory Capacity in Hermes Agent

The agent saves to both automatically. You never have to ask. It decides based on what's durable and reusable.

Memory Targets

Small enough to stay fast, large enough to matter.

Memory Tool Actions

The agent uses the memory tool with these actions:

add - Add a new memory entry

replace - Replace an existing entry with updated content (uses substring matching via old_text)

remove - Remove an entry that's no longer relevant (uses substring matching via old_text)

There is no read action: memory content is automatically injected into the system prompt at session start, so the agent sees its memories as part of its conversation context.
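The three actions can be sketched as a tiny in-memory store. `MemoryStore` is a hypothetical class, not Hermes' implementation, and requiring exactly one matching entry for `old_text` is an assumption made here to keep replace and remove unambiguous.

```python
class MemoryStore:
    """Hypothetical store illustrating add / replace / remove via old_text."""

    def __init__(self):
        self.entries = []

    def _find(self, old_text):
        # Substring matching: locate the single entry that contains old_text.
        matches = [i for i, entry in enumerate(self.entries) if old_text in entry]
        if len(matches) != 1:
            raise ValueError(
                f"expected exactly one entry containing {old_text!r}, "
                f"found {len(matches)}"
            )
        return matches[0]

    def add(self, content):
        self.entries.append(content)

    def replace(self, old_text, content):
        self.entries[self._find(old_text)] = content

    def remove(self, old_text):
        del self.entries[self._find(old_text)]


if __name__ == "__main__":
    store = MemoryStore()
    store.add("Project uses pytest")
    store.add("Timezone: UTC")
    store.replace("pytest", "Project uses pytest with coverage")
    store.remove("Timezone")
    print(store.entries)
```

Matching on a substring means the agent only has to quote a distinctive fragment of an entry, not reproduce it character for character.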

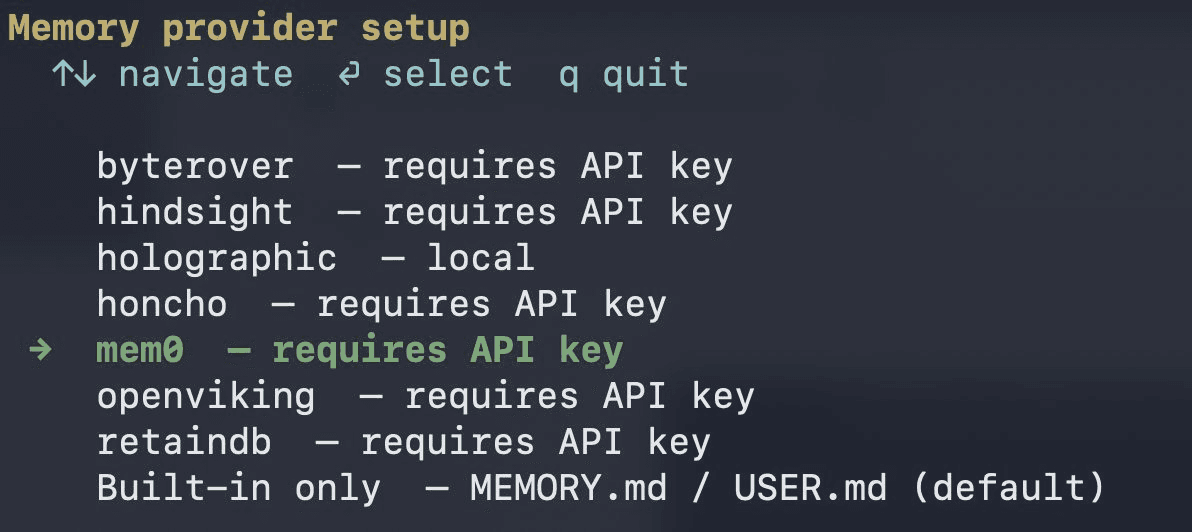

Setup

1. Run this in your terminal

2. Select mem0

Hermes Memory Provider

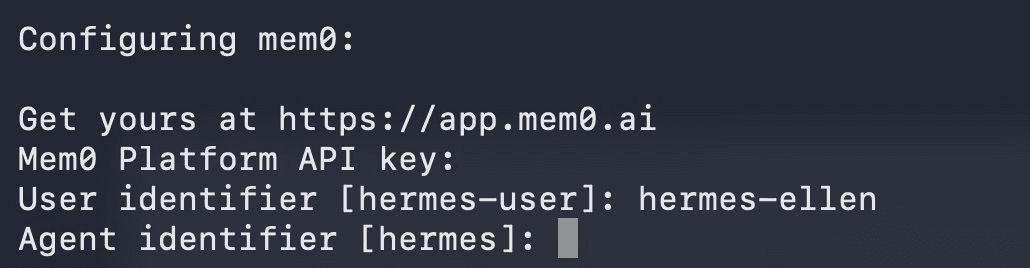

3. Paste your API key and configuration

You can get your API key at app.mem0.ai

Configuring mem0

4. Enable reranking for recall

Reranking for recall

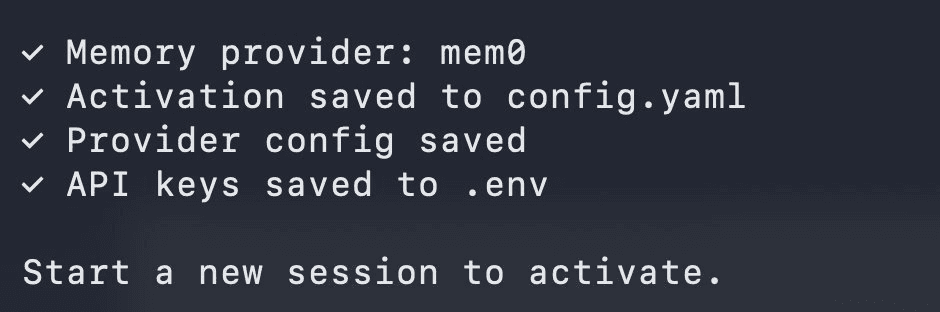

You are done. Memory is configured.

Success Message

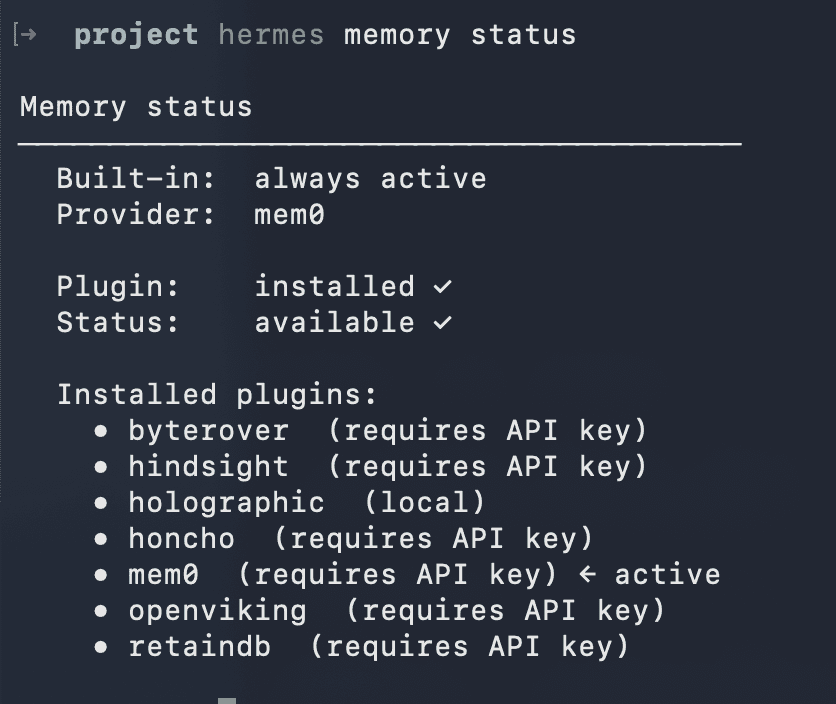

You can check your memory configuration by running

Memory Status

3 Tools the LLM Gets

When Mem0 is active, the agent gains three tools it can call automatically:

mem0_profile: fetches all stored memories about the user.

mem0_search: runs a semantic search with optional reranking and top_k filtering.

mem0_conclude: stores a fact verbatim, with no server-side extraction.

The LLM calls these on its own. No prompting needed.

Reliability

Circuit breaker: 5 consecutive failures pause Mem0 for 2 minutes, then Hermes retries. The agent keeps working throughout.

Non-blocking: All API calls run in background threads. Nothing slows your conversation.
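The circuit breaker behavior described above can be sketched as follows. This is a generic illustration using the thresholds from the text (5 consecutive failures, 2 minute pause). The `CircuitBreaker` class and `call_with_breaker` helper are hypothetical names, not Hermes' code.

```python
import time


class CircuitBreaker:
    """Pause calls after N consecutive failures, then allow retries after a cooldown."""

    def __init__(self, max_failures=5, cooldown_seconds=120.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.cooldown_seconds = cooldown_seconds
        self._clock = clock          # injectable so tests need not sleep
        self._failures = 0
        self._opened_at = None       # None means the breaker is closed

    def allow(self):
        if self._opened_at is None:
            return True
        if self._clock() - self._opened_at >= self.cooldown_seconds:
            # Cooldown is over: close the breaker and let calls through again.
            self._opened_at = None
            self._failures = 0
            return True
        return False

    def record_success(self):
        self._failures = 0           # only consecutive failures count

    def record_failure(self):
        self._failures += 1
        if self._failures >= self.max_failures:
            self._opened_at = self._clock()


def call_with_breaker(breaker, fn, fallback=None):
    """Run fn unless the breaker is open; never raise into the caller."""
    if not breaker.allow():
        return fallback
    try:
        result = fn()
    except Exception:
        breaker.record_failure()
        return fallback
    breaker.record_success()
    return result
```

Returning a fallback instead of raising is what lets the agent keep working: while the breaker is open, memory calls are skipped and the conversation continues without them.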

Why It Works

Most memory systems search at query time, adding latency on every turn. Hermes flips this: search happens between turns, results are cached before you type. Mem0 handles extraction server-side so Hermes never has to decide what's worth remembering.

One command. Persistent memory. No latency cost.

In Context #5

This blog is part of In Context, a mem0 blog series covering AI Agent memory and context engineering.

mem0 is an intelligent, open-source memory layer designed for LLMs and AI agents to provide long-term, personalized, and context-aware interactions across sessions.

Get your free API key here: app.mem0.ai

Or self-host mem0 from our open-source GitHub repository.
