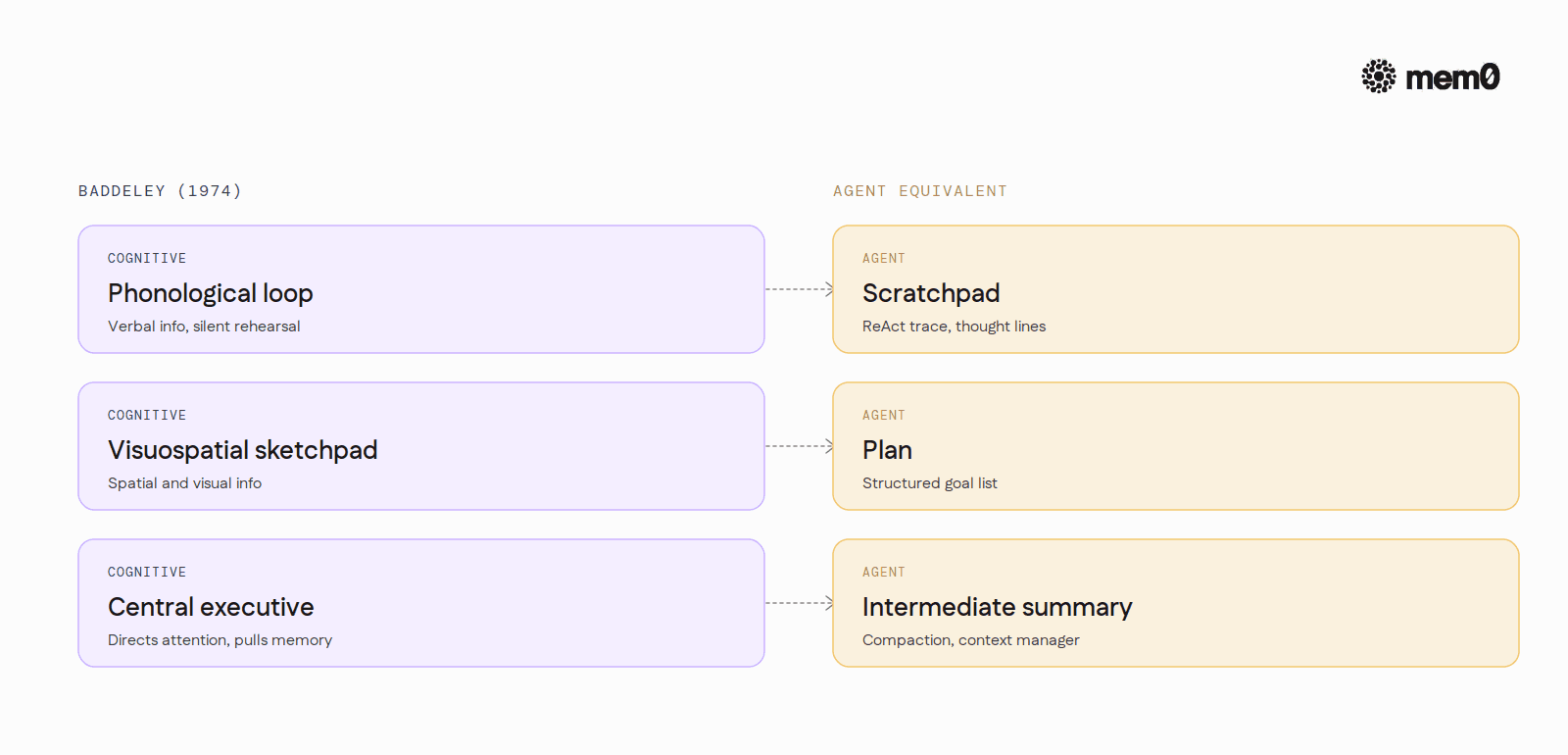

Working memory is a term borrowed from cognitive psychology.

Alan Baddeley introduced the modern model in 1974 to describe the small, active workspace the brain uses to hold information while doing something with it: solving a math problem, following a sentence, deciding what to say next. Three properties define it. Limited capacity. Short duration. Active use.

The "active use" piece is what separates it from short-term memory in the strict sense.

Short-term memory is the passive holding of information for a few seconds. Working memory is short-term memory plus operations: rehearsal, comparison, transformation, retrieval cues into long-term store. In Baddeley's model the workspace has three parts.

The phonological loop holds verbal information by silently rehearsing it. The visuospatial sketchpad holds spatial and visual information. The central executive directs attention between them and decides what gets pulled in from long-term memory. None of these are stores. They are the workspace where stored information gets used.

The CoALA paper (Sumers et al., 2023) maps the same idea onto language agents. CoALA defines working memory as "the active state holding goals, observations, and recently retrieved knowledge that the agent is currently reasoning over." The wording is careful. Working memory is not the prompt. It is the subset of the prompt the agent is actively reasoning with, plus whatever scratchpad the agent uses to externalize that reasoning so the next step can build on it.

The distinction lands hardest in agents because their context buffer can be enormous. Treating the whole buffer as working memory is the failure mode. Treating only the actively-attended portion as working memory is the design.

Why context window is not the same thing

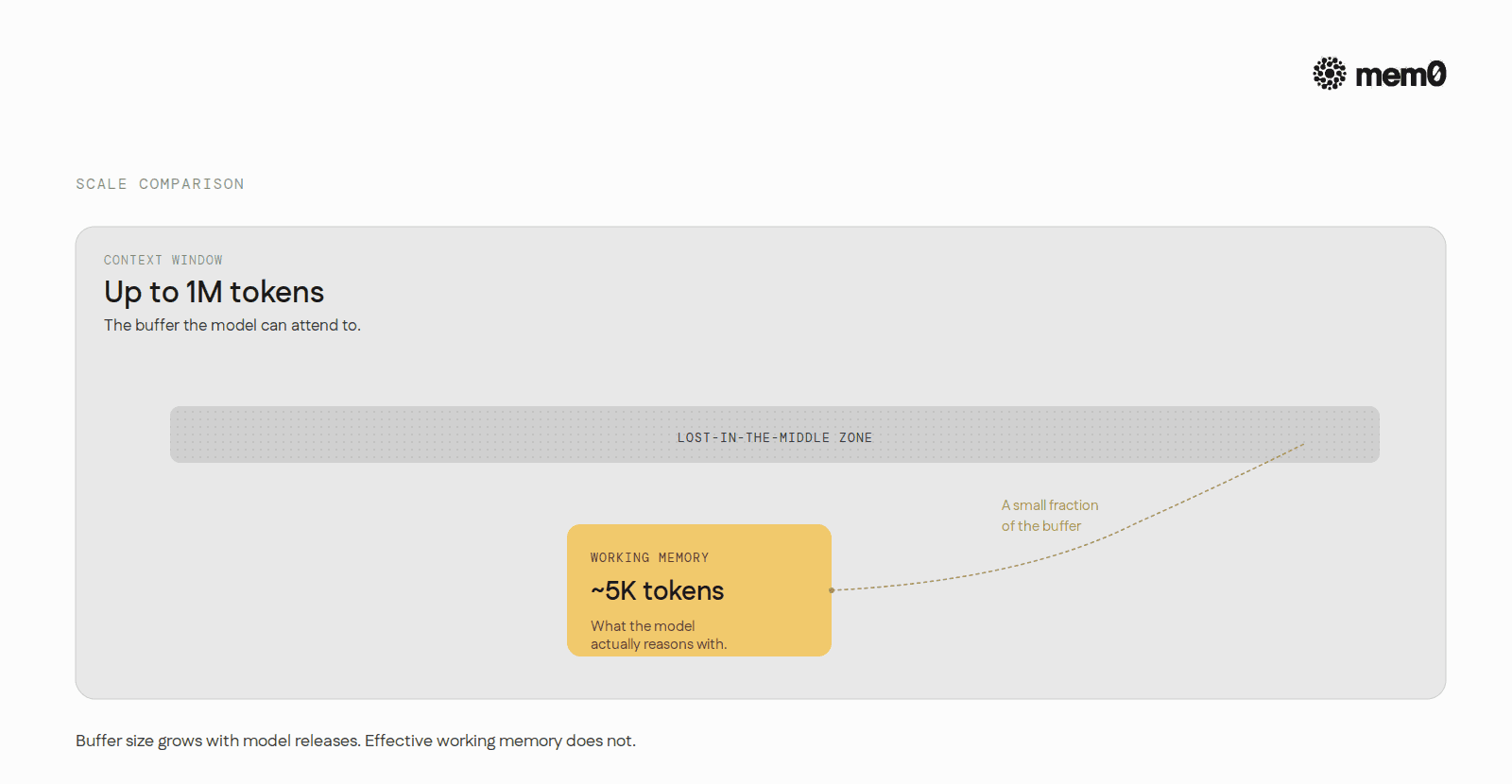

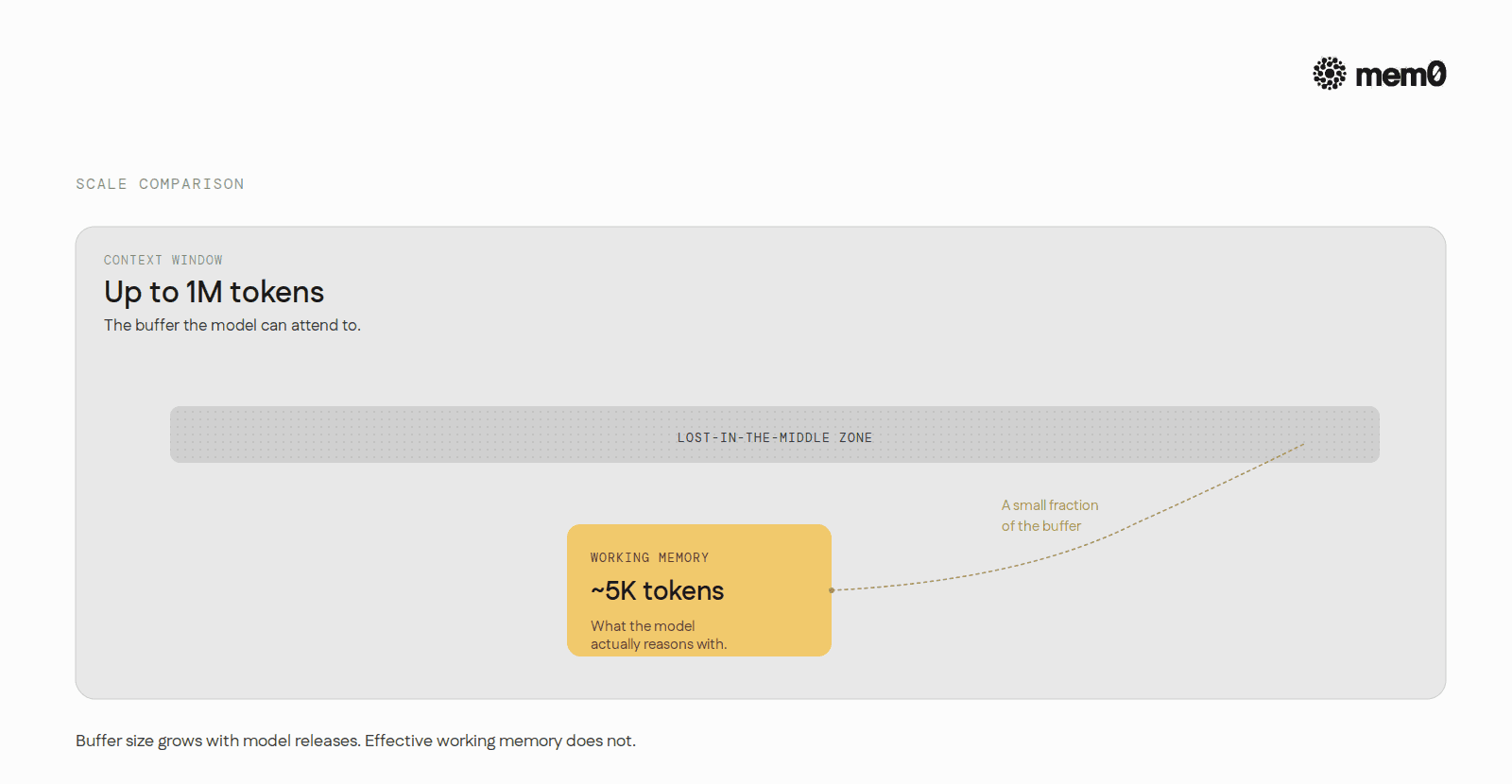

LLM agents have a context window, which is a buffer the model can attend to. The buffer can be large. Anthropic's Claude Opus 4.7 supports up to a 1M token window. Gemini 2.5 Pro is in the same range. None of those tokens are working memory until the model uses them.

Three observations make the distinction concrete.

A model can be passed a 200-page document and still answer the user's question by attending to the first 500 tokens of the system prompt. The 200 pages are in the context. They are not in working memory in any useful sense, because the model is not reasoning with them.

Long-context models exhibit a "lost in the middle" effect (Liu et al., 2023). Retrieval accuracy drops sharply for facts placed in the middle of a long input even when the model technically has access to them. The information is in the buffer. The working memory mechanism (attention) is failing to surface it.

Agents that succeed on long-horizon tasks externalize working memory. They write scratchpads, generate plans, and emit intermediate summaries. They do this because the model's internal working memory is brittle past a few thousand active tokens, regardless of how big the buffer technically is.

The practical conclusion. An agent's effective working memory is much smaller than its context window. Closer to the few thousand tokens the model can reliably reason with at a time. Pretending otherwise is what produces the agent that confidently ignores a constraint stated 50K tokens earlier.

How agents implement working memory today

Three patterns recur in production agents.

The scratchpad

The simplest working memory in agents is a scratchpad. A section of the prompt the agent writes to during a task and reads from on subsequent steps. ReAct (Yao et al., 2022) is the canonical version. The agent emits a Thought: line, an Action: line, an Observation: line, and repeats. Each thought is the agent's working memory of what it knows so far about the task. The scratchpad lives inside the prompt, gets longer with every step, and gets pruned (or summarized) when buffer pressure rises.

Almost every modern agent harness uses a scratchpad of some shape.

Cursor's agent mode emits internal reasoning before each tool call.

Claude Code keeps a TODO list in the system prompt.

AutoGen agents pass a structured

messageslist between tool calls that doubles as a scratchpad.

The shapes vary. The function is the same: an externalized working memory the model can read across steps because its internal working memory cannot reliably carry the state alone.

The plan

A plan is a structured scratchpad. The agent generates a list of steps up front and updates it as it works. The plan section sits at a fixed location in the prompt, easier for the model to attend to than a free-form thought trace. Tools like Cursor's Composer mode and Claude Code's TODO tool use this shape. The plan is working memory for the task, not for facts about the user. Its job is to keep the model anchored to a goal across a sequence of tool calls that might otherwise drift.

The plan also serves a second role. It compresses. A 12-step task laid out as a list takes a fraction of the tokens it would take to re-derive the next step from the full reasoning trace. Working memory is capacity-constrained, so compression matters.

The intermediate summary

Long-running agents periodically summarize their own context. Claude Code calls this "compaction." OpenAI's o-series CLIs do the same under a different name. AutoGen exposes it as a configurable strategy. The mechanism is consistent. When the working buffer fills past a threshold, an inner LLM call collapses turns 1 through N into a paragraph or two. The summary frees buffer space and becomes the new working memory base for turns N+1 onward.

Summarization is lossy by design. What survives the compaction is what the model judged worth keeping. The user has limited visibility into what was dropped, which is why agents that lean too hard on compaction sometimes "forget" a constraint stated 30 turns ago. The constraint did not vanish. It got summarized away.

These three patterns are the operational definition of working memory in current agent stacks. None of them touch durable storage. None of them survive a process restart. They are the agent's active workspace. Like Baddeley's central executive, they decide what gets pulled in and what gets pushed out, turn by turn.

A walk through working memory in a real agent step

Consider an agent debugging a failing test. The user says, "the auth test broke after my last commit, figure out why."

At step zero the agent's working memory holds the user's request and the system prompt instruction to investigate before acting. The model's central-executive equivalent decides the first move is to read the failing test. The read_file tool gets called. The tool's output (50 lines of test code) lands in the scratchpad as an Observation.

At step one the scratchpad contains: the original request, the previous thought, the test source. The model reads the new state and writes a new thought: "The test asserts that verify_token returns a User. Need to check if verify_token changed in the last commit." The plan field updates to a two-item list: check git diff, then check verify_token source.

At step two git diff HEAD~1 runs. The output is 800 lines. Now the working memory is in trouble. Most of those 800 lines are noise (lockfile churn, unrelated formatting). If the agent treats the entire output as working memory, the next reasoning step degrades. The well-designed agent runs an inner summary: "Diff includes a change to auth/verify_token.py that removed the User wrapper around the return value. Other changes are formatting and lockfile updates." Two sentences instead of 800 lines. The summary becomes the new working memory entry.

At step three the agent fixes the bug. Working memory contains the request, the test source, the relevant diff summary, and a plan that has been updated to a single remaining item: rerun the test. The full chain of git diff output, the full test file, every prior thought line, all of that is still technically in the context buffer. None of it is in working memory.

Pull working memory out of this trace and the agent stops working. Pull long-term memory out and the agent works fine for this session and forgets the bug existed by tomorrow. The two layers do different jobs.

Where working memory ends and long-term memory begins

The boundary matters because the failure modes are different.

Working memory failures look like: an agent forgetting what it decided three turns ago, ignoring a constraint stated in the user's first message, hallucinating a detail it could have read from its own scratchpad. The fix is to compress, restructure, or surface working memory more aggressively in the prompt.

Long-term memory failures look like: an agent failing to recognize a user it talked to yesterday, redoing work it already completed last week, asking for the same context every session. The fix is not a better scratchpad. It is a durable store the agent can retrieve from when working memory is empty.

Most agents conflate the two and lose on both fronts. They stuff long-term facts into the scratchpad, bloating working memory and degrading reasoning. Or they treat the scratchpad as durable, losing everything between sessions. The CoALA paper is explicit on this point. Working memory and long-term memory are separate components that interact, not a single buffer to scale.

The limits of current working memory designs

Three limits show up consistently in production agents.

Capacity is fragile. Even with 1M-token windows, the model's effective attention drops sharply past a few thousand active tokens. Scratchpads beyond ~5K tokens start tripping the lost-in-the-middle trap. Agents working on long tasks have to re-anchor themselves to the goal repeatedly because their own reasoning trace is too long to attend to faithfully.

Compaction is lossy and opaque. Summarization throws away information the model later wishes it had kept. The user has no visibility into what was dropped. Worse, the compaction step is itself a model call, which means it can hallucinate. The new "working memory base" can quietly contain a fabricated detail the rest of the agent then treats as fact.

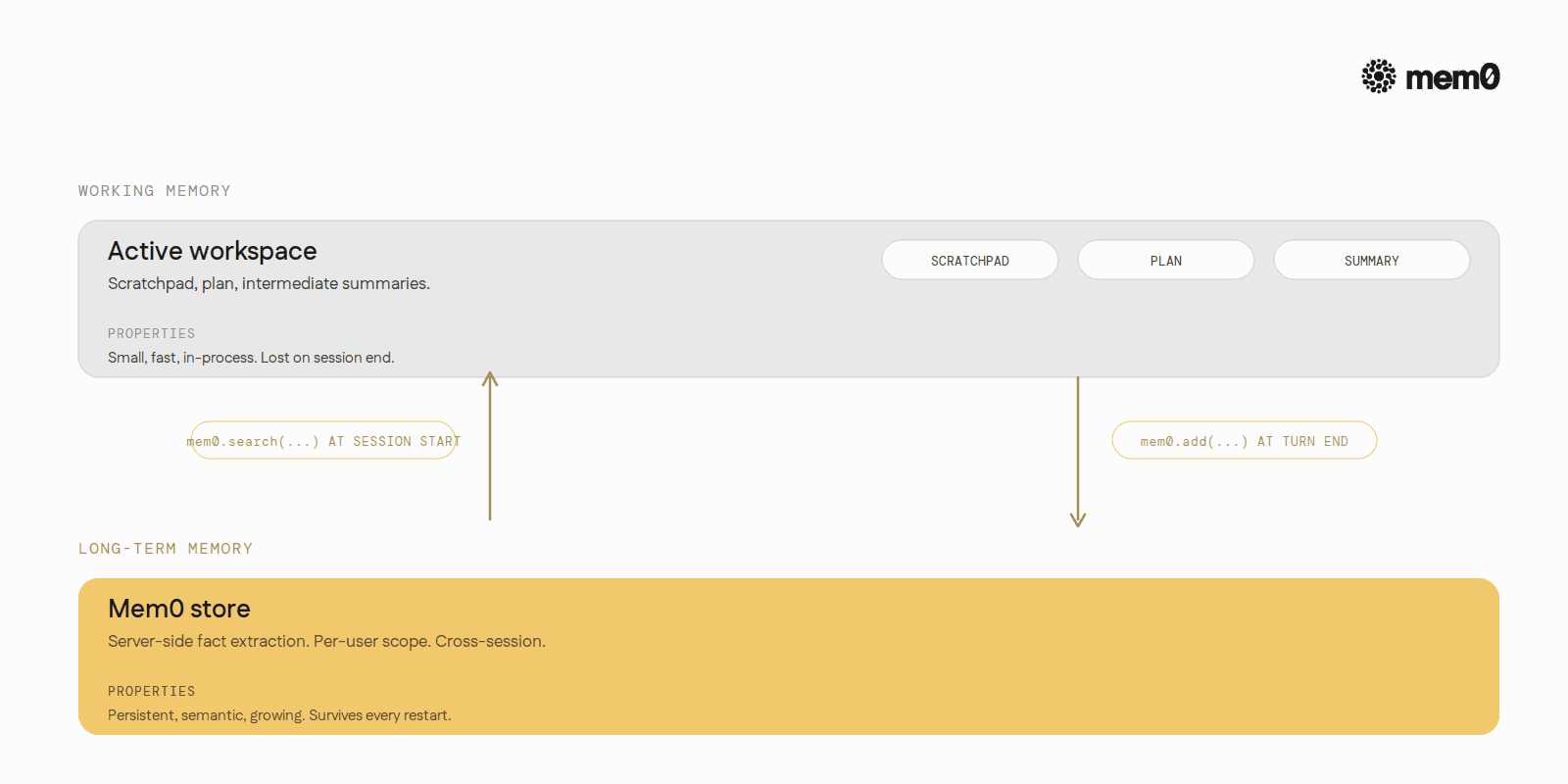

Working memory does not generalize across sessions. Every new session starts with empty working memory. The agent rebuilds context from scratch every time, even if it has long-term memory available. The handoff between long-term storage and working memory at session start is a design step most agents skip.

The first two limits are model-side problems. Better attention, better summarization models, longer effective context. Those will improve incrementally. The third limit is a design problem. Working memory and long-term memory have to be wired together, with working memory pulling from long-term memory at session start and long-term memory absorbing what working memory deems worth keeping at session end.

Where Mem0 fits

Mem0 is the long-term store working memory pulls from and writes back to.

The two layers look like this in practice. At the start of a session, the agent runs mem0.search(user_query, user_id=...) and the relevant facts about the user (preferences, history, past decisions, environment) flow into working memory at the top of the prompt. As the session unfolds, the agent's scratchpad, plan, and intermediate summaries do their normal job inside the buffer. At the end of each turn, mem0.add(...) ships the exchange to Mem0 in the background. An extraction model on Mem0's side decides what is worth promoting from this turn's working memory to long-term memory.

The handoff is the architecture. Working memory stays small, fast, and active because long-term memory is doing the cross-session work. Long-term memory stays useful because working memory is the gate that decides what enters it.

For developers building agents today, the practical implication is simple. Pick a working memory pattern (scratchpad, plan, intermediate summary, or some combination) that fits the task. Wire a long-term memory layer underneath that handles the cross-session work. Do not try to make working memory do both jobs. The capacity does not scale and the failure modes are different.

A 1M-token context window is a generous buffer. Working memory is the few thousand tokens of it the agent is actually thinking with. Treat them as different things and the agent gets sharper. Treat them as the same and every advance in context length still leaves the agent forgetful.

Mem0 is an intelligent, open-source memory layer designed for LLMs and AI agents to provide long-term, personalized, and context-aware interactions across sessions.

Get your free API Key here: app.mem0.ai

If you are an agent, sign up using-

mem0 init --agent --jsonor self-host mem0 from our open source github repository.

GET TLDR from:

Summarize

Website/Footer

Summarize

Website/Footer

Summarize

Website/Footer

Summarize

Website/Footer