In April, we shipped the token-efficient memory algorithm and noted that multi-session and temporal reasoning were the hardest categories for memory.

Today, we’re shipping meaningful gains on both. On LongMemEval, temporal reasoning improves by +3.8 points and multi-session reasoning by +1.5 points, within the same <7,000-token retrieval budget.

The April release, powered by single-pass extraction and hierarchical retrieval, reached strong benchmark performance at 3-4x lower token cost than full-context approaches, which routinely consume 25,000+ tokens per query.

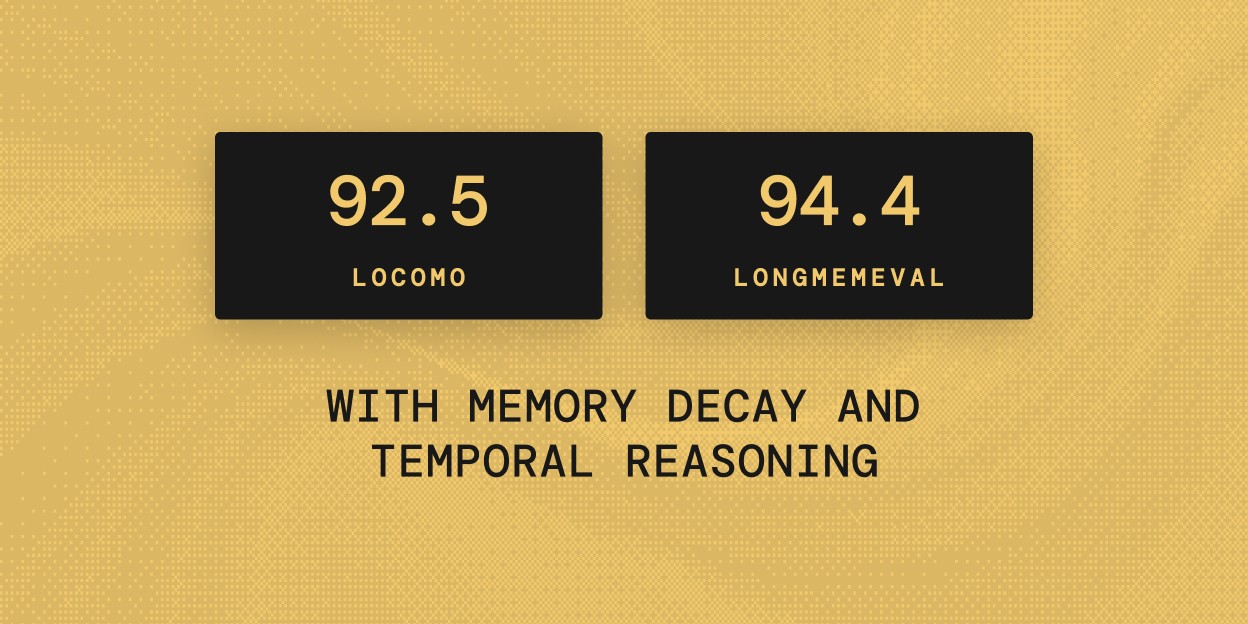

This release adds two new capabilities, Temporal Reasoning and Memory Decay, at the same token cost. Together, they make retrieval more time-aware and bring the updated algorithm to 92.5% on LoCoMo and 94.4% on LongMemEval.

Summary of Mem0's updated token-efficient algorithm (May 2026):

| Benchmark | April algorithm | Updated algorithm | Δ |
|---|---|---|---|
| LoCoMo | 91.6% | 92.5% | +0.9 pts |
| LongMemEval | 93.4% | 94.4% | +1.0 pts |

Note: This table shows the overall top_200 scores. Category-level gains, including multi-session and temporal reasoning, are broken down in the Results section below.

What changed

1. Temporal Reasoning

When a memory is written, Mem0 now extracts temporal metadata alongside the memory itself: when the event happened, whether it is ongoing or completed, how precise the timing is, and what kind of memory it is.

This lets search distinguish between current facts, historical facts, future plans, preferences, relationships, and timeless facts. A question like “where does Alice work now?” should surface the current job, not an older job that happens to be semantically similar.
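As a rough illustration of the metadata described above, here is a minimal Python sketch. The six kinds mirror the categories listed above, and `event_end` is the field named later in this post; every other field name, the enum values, and the `is_current` helper are hypothetical, not Mem0's actual schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class MemoryKind(Enum):
    # The six kinds named in the post; the string values are hypothetical.
    CURRENT_FACT = "current_fact"
    HISTORICAL_FACT = "historical_fact"
    FUTURE_PLAN = "future_plan"
    PREFERENCE = "preference"
    RELATIONSHIP = "relationship"
    TIMELESS_FACT = "timeless_fact"


class Precision(Enum):
    # Hypothetical granularity levels for "how precise the timing is".
    DAY = "day"
    MONTH = "month"
    YEAR = "year"
    UNKNOWN = "unknown"


@dataclass
class TemporalMetadata:
    event_start: Optional[date]       # when the event happened, if known
    ongoing: bool                     # True while the fact still holds
    precision: Precision              # how precise event_start is
    kind: MemoryKind                  # what kind of memory this is
    event_end: Optional[date] = None  # set once an ongoing fact is closed


@dataclass
class Memory:
    text: str
    temporal: TemporalMetadata


def is_current(memory: Memory) -> bool:
    """Hypothetical helper: True if the memory is ongoing and not yet closed.

    A signal for ranking, not a filter: historical memories stay searchable.
    """
    t = memory.temporal
    return t.ongoing and t.event_end is None


old_job = Memory(
    "Alice worked at Acme",
    TemporalMetadata(date(2019, 3, 1), False, Precision.MONTH,
                     MemoryKind.HISTORICAL_FACT, event_end=date(2023, 6, 1)),
)
new_job = Memory(
    "Alice works at Globex",
    TemporalMetadata(date(2023, 6, 1), True, Precision.MONTH, MemoryKind.CURRENT_FACT),
)
```

Both jobs stay in the store. The metadata only tells search which one describes the present.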

Temporal Reasoning is additive to the existing retrieval pipeline. It does not replace semantic relevance or filter memories out. Instead, it reranks results for time-sensitive queries so the right dated instance is more likely to surface.
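Here is a minimal sketch of additive reranking. It assumes a hypothetical keyword-based intent detector and a fixed `bonus`. Both are illustrations, not Mem0's method. The temporal signal is added on top of the semantic score, and every candidate is kept.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Toy cue lists standing in for real query understanding (hypothetical).
PRESENT_CUES = {"now", "currently", "current", "today"}
PAST_CUES = {"previously", "formerly", "former", "before"}


@dataclass
class Candidate:
    text: str
    semantic_score: float  # similarity from the existing retrieval pipeline
    kind: str              # e.g. "current_fact" or "historical_fact"


def temporal_intent(query: str) -> Optional[str]:
    """Return the memory kind a time-sensitive query asks for, or None."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    if words & PAST_CUES:
        return "historical_fact"
    if words & PRESENT_CUES:
        return "current_fact"
    return None


def rerank(query: str, candidates: List[Candidate], bonus: float = 0.2) -> List[Candidate]:
    """Add a temporal bonus on top of semantic relevance; never drop a candidate."""
    intent = temporal_intent(query)

    def final_score(c: Candidate) -> float:
        boost = bonus if intent is not None and c.kind == intent else 0.0
        return c.semantic_score + boost

    return sorted(candidates, key=final_score, reverse=True)


candidates = [
    Candidate("Alice worked at Acme", 0.82, "historical_fact"),
    Candidate("Alice works at Globex", 0.78, "current_fact"),
]
```

For "where does Alice work now?", the current job overtakes the slightly more similar old job. A query with no time cue keeps the pure semantic order.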

Ongoing facts carry a state key that links memories about the same evolving fact. When a new state takes over, the older state is closed with an event_end. This keeps the timeline clean, and the historical context remains retrievable.
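The state-key behavior can be sketched as follows. `state_key` and `event_end` come from the description above. The `StatefulMemory` class, the `add_state` and `current_state` helpers, and the `alice.employer` key format are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class StatefulMemory:
    text: str
    state_key: str                    # links memories about the same evolving fact
    event_start: date
    event_end: Optional[date] = None  # None while the state is still open


def add_state(store: List[StatefulMemory], new: StatefulMemory) -> List[StatefulMemory]:
    """Append a new state, closing (not deleting) any open state with the same key."""
    for m in store:
        if m.state_key == new.state_key and m.event_end is None:
            m.event_end = new.event_start  # the old state ends when the new one begins
    store.append(new)
    return store


def current_state(store: List[StatefulMemory], key: str) -> Optional[StatefulMemory]:
    """Return the open state for a key, if there is one."""
    open_states = [m for m in store if m.state_key == key and m.event_end is None]
    return open_states[-1] if open_states else None


store: List[StatefulMemory] = []
add_state(store, StatefulMemory("Alice works at Acme", "alice.employer", date(2019, 3, 1)))
add_state(store, StatefulMemory("Alice works at Globex", "alice.employer", date(2023, 6, 1)))
```

After the second call, the Acme record is still in the store. Its event_end is set to the date the Globex state began, so it remains available as history.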

Full technical breakdown: Temporal Reasoning

2. Memory Decay

Memory Decay makes recently used memories easier to surface, while older idle memories move lower in the ranking. Recent memories can receive up to a 1.5x boost, while stale memories can be dampened toward 0.3x.
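One way to get a multiplier with these bounds is an exponential curve over time since last use. This is a minimal sketch that assumes a hypothetical 30-day half-life and an exponential shape. The post only specifies the 1.5x ceiling and the 0.3x floor.

```python
import math

BOOST_CEILING = 1.5    # maximum multiplier for a just-used memory (from the post)
DAMPEN_FLOOR = 0.3     # multiplier that long-idle memories approach (from the post)
HALF_LIFE_DAYS = 30.0  # hypothetical: how quickly the boost fades


def decay_multiplier(idle_days: float, half_life_days: float = HALF_LIFE_DAYS) -> float:
    """Map days since last use to a ranking multiplier between 0.3 and 1.5."""
    if idle_days < 0:
        raise ValueError("idle_days must be non-negative")
    # freshness falls from 1.0 (just used) toward 0.0 (long idle)
    freshness = math.exp(-math.log(2) * idle_days / half_life_days)
    return DAMPEN_FLOOR + (BOOST_CEILING - DAMPEN_FLOOR) * freshness
```

Under these assumptions, a memory used today gets 1.5x, and one idle for a single half-life (30 days) gets about 0.9x. A memory idle for years settles near 0.3x.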

Nothing gets deleted or hidden. A stale-but-relevant memory can still surface when it is the best match; it just no longer competes as if it were equally current forever.

This helps long-running agents handle the slow accumulation problem: old projects, outdated preferences, resolved tickets, and past plans all remain available, but they stop dominating present-tense searches.

Memory Decay is a search-time change only. Existing memories, embeddings, categories, and metadata stay untouched, and search latency remains effectively unchanged at the median.
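The ranking behavior above can be illustrated with a small, self-contained sketch. It assumes that decay multiplies a precomputed relevance score and uses a hypothetical 30-day half-life curve between 1.5x and 0.3x. `StoredMemory` and `search` are illustrative names, not the Mem0 API. The dataclass is frozen to mirror the point that decay is applied at search time and never rewrites stored memories.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)  # frozen: ranking reads stored memories but never edits them
class StoredMemory:
    text: str
    relevance: float  # semantic relevance to the current query
    idle_days: float  # days since the memory was last used


def multiplier(idle_days: float, half_life_days: float = 30.0) -> float:
    # Hypothetical curve: 1.5x when just used, approaching 0.3x as the memory idles.
    return 0.3 + 1.2 * math.exp(-math.log(2) * idle_days / half_life_days)


def search(memories: List[StoredMemory]) -> List[Tuple[float, StoredMemory]]:
    """Rank every memory by relevance x decay multiplier; nothing is filtered out."""
    scored = [(m.relevance * multiplier(m.idle_days), m) for m in memories]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


memories = [
    StoredMemory("Fresh but weak match", relevance=0.15, idle_days=1),
    StoredMemory("Moderate match, two months idle", relevance=0.30, idle_days=60),
    StoredMemory("Stale but strong match", relevance=0.95, idle_days=365),
]
```

In this example, the year-old memory still ranks first because its relevance outweighs the dampening. When two memories are equally relevant, they are ordered by recency.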

Full technical breakdown: Memory Decay

Results

LongMemEval

LongMemEval evaluates memory across single-session and multi-session contexts, including knowledge updates and temporal reasoning. Across 500 questions, the algorithm reaches 94.4%, up from a baseline of 93.4%.
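For scale, each of the 500 questions is worth 0.2 points, so the overall gain corresponds to five more questions answered correctly:

$$
0.934 \times 500 = 467, \qquad 0.944 \times 500 = 472, \qquad 472 - 467 = 5
$$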

| Question Type | Baseline | New | Δ |
|---|---|---|---|
| Overall | 93.4% | 94.4% | +1.0 pts |
| knowledge-update | 96.2% | 93.6% | -2.6 pts |
| multi-session | 86.5% | 88.0% | +1.5 pts |
| single-session-assistant | 100.0% | 98.2% | -1.8 pts |
| single-session-preference | 96.7% | 96.7% | 0.0 pts |
| single-session-user | 97.1% | 98.6% | +1.5 pts |
| temporal-reasoning | 93.2% | 97.0% | +3.8 pts |

Three things stand out:

Temporal reasoning is the clearest win, improving from 93.2% to 97.0%.

Multi-session improves from 86.5% to 88.0%, with more work left on cross-session reasoning.

Knowledge-update regresses by 2.6 points, and single-session-assistant drops from a perfect 100.0% to 98.2%.

Multi-session is worth calling out because it maps closely to production memory pressure. A user’s old job and current job, a preference that changed six months later, or a side project that quietly died can all live in the same memory store.

The hard part is not storing those facts; it is retrieving the one that is true for the question being asked.

LoCoMo

LoCoMo tests single-hop, multi-hop, open-domain, and temporal recall across 1,540 conversational questions.

We report results in two views: by retrieval cutoff, and by question category.

Performance by question category:

| Category | Baseline | New | Δ |
|---|---|---|---|
| Overall | 91.6% | 92.5% | +0.9 pts |
| Single-hop | 92.3% | 94.6% | +2.3 pts |
| Multi-hop | 93.3% | 95.4% | +2.1 pts |
| Open-domain | 76.0% | 82.3% | +6.3 pts |
| Temporal | 92.8% | 92.5% | -0.3 pts |

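The largest gain on LoCoMo is in open-domain questions (+6.3 pts), followed by single-hop (+2.3 pts) and multi-hop (+2.1 pts), where the system has to figure out which instance applies and what is true right now. Temporal is essentially flat on LoCoMo (-0.3 pts); the temporal gains show up on LongMemEval.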

Try it out

The API is unchanged, so no migration is required.

Temporal Reasoning is on by default for new projects, and Memory Decay can be enabled from the dashboard or SDK.

The base algorithm remains available in the open-source SDK. The two new capabilities, Temporal Reasoning and Memory Decay, are platform-only for now.

Read the docs:

→ Temporal Reasoning

→ Memory Decay

What we're building next

Temporal abstraction. Representing how events relate over time, not just what happened. BEAM 10M scores define the current frontier.

Cross-session structure. Modeling how information evolves across sessions. Requires connecting scattered interactions into coherent timelines.

Agent-native memory. Extraction and retrieval running asynchronously as infrastructure, so agents don't spend cycles managing their own context.

Get started

The token-efficient memory algorithm, with Temporal Reasoning and Memory Decay, is live on the Mem0 platform today.

Both features are platform-only for now; the base algorithm remains in the open-source SDK.

→ Start free at app.mem0.ai

→ If you are an agent, sign up with: mem0 init --agent --json

→ Read the original token-efficient algorithm post

→ Docs
