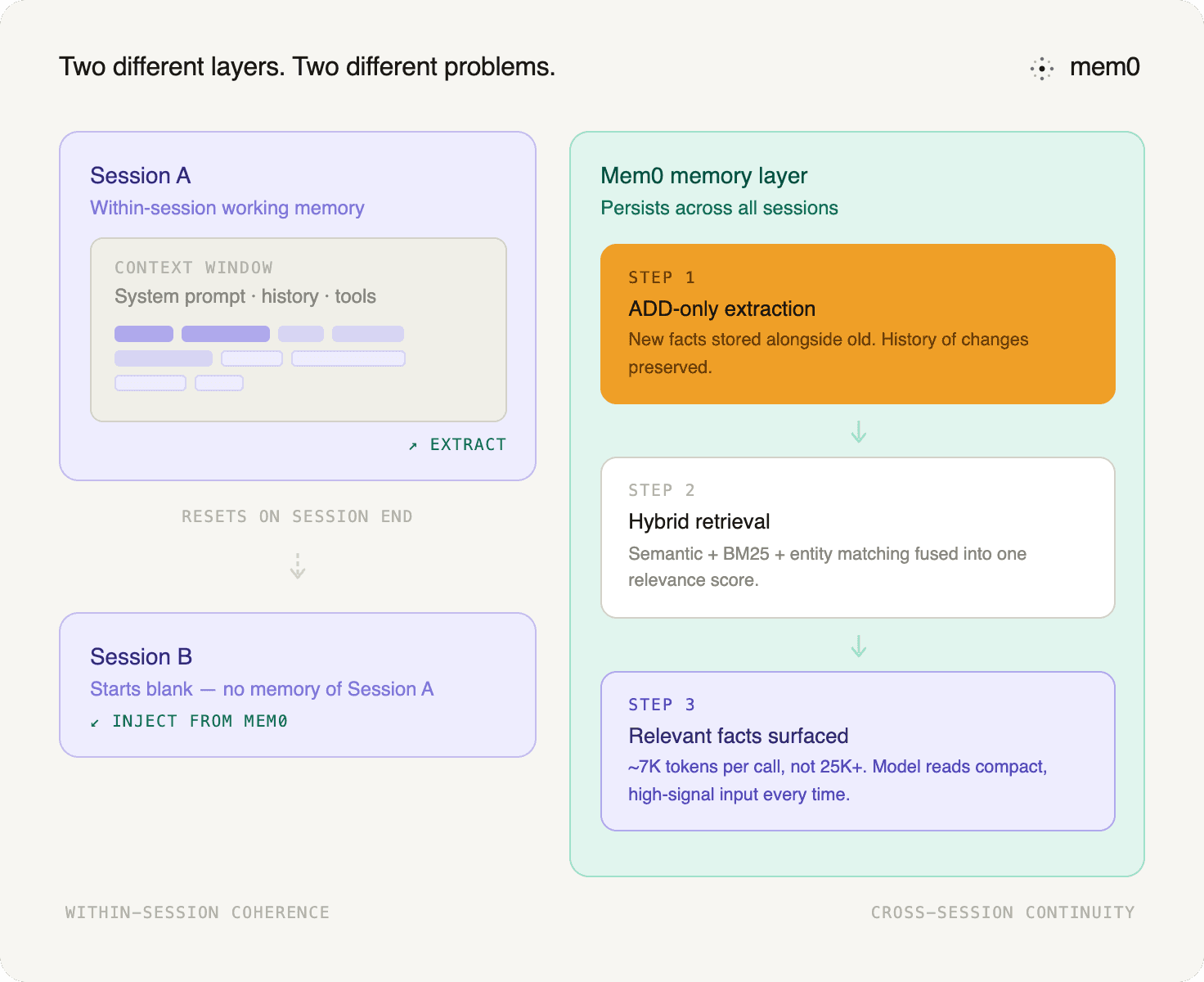

TL;DR: A large context window handles within-session coherence. Persistent memory handles everything else: continuity across sessions, cost control at scale, and reliable retrieval of the facts that actually matter. The two are not alternatives. They are different layers of the same architecture.

When GPT-4 shipped with an 8K context window, the obvious complaint was that it was too small. So the industry made it bigger. Then bigger again. 128K tokens. 200K. 1M. And at each milestone, someone made the argument that memory was a solved problem, that you could simply fit everything into the prompt.

That argument keeps not working out.

Not because the numbers are wrong - 128K tokens is roughly 300 pages of text, which genuinely is a lot. It keeps not working out because a context window and persistent memory are solving two different problems entirely. Making one bigger does not make the other less necessary.

This piece breaks down exactly why, with real benchmark numbers and a precise look at what goes wrong when you try to use a context window as a substitute for actual memory.

What a Context Window Actually Is

A context window is the total amount of text an LLM can process in a single inference call. Everything the model "sees" during a conversation - the system prompt, conversation history, retrieved documents, tool outputs, the current message - has to fit within this limit. Tokens beyond the limit are cut off. The model cannot read them.

Think of it as working memory. The model holds everything in the context window in mind simultaneously while generating a response. Within a single session, this works well. The model can reason over everything you give it, refer back to earlier parts of the conversation, and stay coherent across a long exchange.

The problem is not what happens within a session. It is what happens between sessions, and what happens to quality and cost as contexts get longer.

Five Ways Large Context Windows Fail in Practice

1. The context resets every session

This is the most fundamental limitation, and no amount of context length addresses it. At the end of a session, the context window is gone. Start a new conversation, and the model has no idea who you are, what you discussed, or what it learned. Every session starts from scratch.

A 128K context window gives you a lot of space to work with during a single conversation. It gives you nothing across conversations. A user who interacted with your agent 50 times has no persistent presence in the system unless you have built something to store and retrieve that history.

This is what Mem0 means when it describes the stateless agent problem: agents without persistent memory cannot learn, cannot personalize, and cannot maintain continuity over time, regardless of how large their working context is.

2. Cost scales with every token at every call

Attention mechanisms in transformer models scale roughly quadratically with sequence length. In practical terms, doubling your context size more than doubles the compute cost per inference call. Every additional token you stuff into the context makes every call more expensive.

A customer support agent handling 10,000 conversations a day with a 128K context window filled with conversation history is burning tokens at a rate that bears no resemblance to what the same agent would cost with a properly designed memory layer that surfaces only the 5-10 most relevant facts per query.

Mem0's latest research quantifies this directly. Full-context approaches on the same benchmarks can consume 25,000+ tokens per query. Mem0's latest memory algorithm stays under 7,000 tokens per retrieval call, with LoCoMo averaging 6,956 tokens/query.

That is the practical difference between dumping everything into the prompt and retrieving only the memory that matters. Large context windows make the prompt bigger. A memory layer makes the prompt more selective.

3. Models lose things in the middle

Research from Stanford NLP on how language models use long contexts found a consistent and troubling pattern: model performance degrades significantly when the relevant information is placed in the middle of a long input, rather than at the beginning or end. The study, published as "Lost in the Middle: How Language Models Use Long Contexts", showed that retrieval accuracy dropped substantially as context length grew and as the relevant passage moved away from the edges.

This means a 128K context window is not 128K tokens of uniform, reliable attention. It is a document where the edges get read carefully and the middle gets skimmed. If the user preference that would make the difference in your agent's response happens to sit 60,000 tokens back in the conversation history, there is a real chance the model misses it.

A memory system that extracts and surfaces the most relevant facts as a short, ranked list at inference time does not have this problem. The model always reads a compact, high-signal input rather than a sprawling document it will partially ignore.

4. Flat token weighting treats everything as equally important

A context window gives every token roughly equal treatment during the attention computation. The user's offhand comment from three hours ago sits at the same priority level as their current question. A 500-word explanation of how a function works competes for attention with the single line that says "I prefer tabs over spaces."

Memory systems are inherently selective. They extract what matters, discard what does not, and rank retrieved facts by relevance to the current query. As Mem0's published research describes it: context windows are flat and linear, treating all tokens equally with no sense of priority. Memory is hierarchical and structured, prioritizing the details that actually shape the response.

The difference shows up in response quality. A model working from a compact, curated memory retrieval generates better-targeted responses than one sifting through a dense wall of conversation tokens where the signal is buried in the noise.

5. Proximity bias skews what the model acts on

Even within a well-filled context window, models show a bias toward tokens that appear nearest to the current query - recent messages disproportionately influence the response relative to earlier, potentially more important content. This recency bias means that a critical piece of context from early in a long conversation may effectively be overridden by something trivial said more recently.

A properly structured memory system retrieves based on semantic relevance to the current query, not on where something appeared in the conversation timeline. The fact that the user mentioned three months ago that they are building for a HIPAA-compliant environment surfaced when the current question touches on data handling, regardless of how much has been said since.

The Accuracy-Cost Paradox

The old argument for full-context retrieval was simple: if you pass everything into the prompt, the model has access to everything.

That works in theory. It breaks down in production.

Full-context approaches routinely push prompts above 25,000 tokens per query. That raises cost, increases latency, and still does not guarantee the model will use the right part of the context.

Mem0's latest research results show a different trade-off. On LoCoMo, Mem0 reports 92.5 while averaging under 7,000 tokens/query. On LongMemEval, it reports 94.4 with 6,787 tokens/query. On BEAM, it reports 62.0 at 1M scale and 45.0 at 10M scale, while staying around 6.7K–6.9K tokens/query.

The lesson is not just that memory can be cheaper than full-context prompting. It is that selective memory can now be highly accurate while using a much smaller prompt budget.

That changes the production argument. The question is no longer "can a huge context window fit the data?" The question is, "Why pay to send everything when a memory layer can retrieve the few facts that matter?"

Benchmark | Score | Avg tokens/query |

|---|---|---|

LoCoMo | 92.5 | 6,956 |

LongMemEval | 94.4 | 6,787 |

BEAM 1M | 64.1 | 6,710 |

BEAM 10M | 48.6 | 6,910 |

What Persistent Memory Actually Does Differently

The distinction is not just architectural. It changes what the agent can do.

A context window gives you coherence within a session. Persistent memory gives you continuity across sessions. These are not the same thing, and you cannot get the second one by scaling the first.

With persistent memory, a user's preferences, context, and history survive session boundaries. The agent that helped them debug a Python script last Tuesday knows - without being told - that they are still working on the same project when they come back on Friday. A customer support agent does not ask the user to re-explain a problem they reported three weeks ago. A coding copilot does not suggest tabs to a user who has said twice that they prefer spaces.

None of that is possible with a context window alone, regardless of how big it is.

Mem0's current memory pipeline uses single-pass ADD-only extraction. New facts are extracted and added without overwriting or deleting older facts during extraction. When information changes, the new fact is stored alongside the old one.

Retrieval then decides what is relevant for the current query. The latest retrieval stack combines semantic similarity, BM25 keyword matching, and entity matching, then fuses those signals into a final score.

This matters because user memory is not just a list of facts. It is a history of changes. A user can move cities, switch projects, update preferences, or revise a decision. A good memory layer should preserve that evolution instead of flattening it into the latest summary.

In the current architecture, external graph memory has been replaced by built-in entity linking. Entities are extracted from memories, stored in a parallel entity collection, and used to boost relevant results at search time.

The current distinction is not Mem0 versus Mem0g. It is flat retrieval versus selective memory with hybrid search and entity-aware ranking.

You can read more about how this extraction process differs from history summarization in the LLM chat history summarization guide, and how the memory types map to different scopes in the short-term vs. long-term memory breakdown.

Context Windows and Memory Are Not Competing

It is worth being precise here because the framing of "context window vs. memory" can imply they are alternatives. They are not. They are different tools for different parts of the problem.

Context windows handle within-session coherence. The model needs a working context to reason over the current conversation, retrieved memories, and available tools. That is the context window doing its job.

Persistent memory handles across-session continuity. What the user told you last month, what preferences they have expressed, what problems are ongoing - this is what a memory layer handles.

The right architecture uses both. A well-sized context window for active reasoning, populated with relevant facts surfaced by a memory retrieval system rather than stuffed with raw history. This is how you get coherence and continuity without the cost and latency penalty of full-context approaches.

The context engineering guide covers how to think about what goes into the context window and when, which is a useful complement to understanding what belongs in memory instead.

What This Means for Teams Building Agents

If you are relying on a large context window to handle everything memory-related, you are paying for a lot of tokens that are either wasted, unattended to by the model, or wiped clean at the end of every session.

Large context windows delay forgetting. They do not create persistent memory.

The right architecture uses both layers. The context window remains the model's working memory for the current request. Persistent memory stores durable facts, preferences, timelines, and entity relationships across sessions.

Mem0's latest results make the production case clearer: selective memory can reach strong benchmark accuracy while keeping prompt size under 7,000 tokens per retrieval call. That is the difference between remembering everything by carrying the whole transcript and remembering well by retrieving what matters.

If your agent needs to live longer than one session, memory is not optional. It is part of the core system design.

The AI agent memory guide is a good starting point if you are still working out what kind of information belongs in long-term storage versus session context. And the long-term memory guide covers how to implement persistent memory in practice without building the extraction and deduplication pipeline from scratch.

For teams already running agents in production, the context compression guide comparing Hermes and Claude shows how real systems handle context management under load. The memory decay and recency-aware ranking guide covers what happens when long-running agents accumulate stale memories and how retrieval ranking compensates.

Frequently Asked Questions

What is the difference between a context window and persistent memory?

A context window is the model's working memory for a single inference call: everything in it is gone when the session ends. Persistent memory is an external store that survives session boundaries, allowing agents to recall facts, preferences, and history across conversations.

Can a large context window replace persistent memory?

No. A 1M token context window still resets at the end of every session. It also costs more per call as it grows, and models don't attend to the middle of long contexts reliably. Persistent memory solves a structurally different problem.

How many tokens does Mem0 use per retrieval call?

Mem0's latest memory algorithm averages under 7,000 tokens per retrieval call across benchmarks: 6,956 on LoCoMo, 6,787 on LongMemEval, and 6.7K – 6.9K on BEAM. Full-context approaches on the same benchmarks consume 25,000+ tokens per query.

What is ADD-only extraction?

Instead of overwriting or deleting old facts when new information arrives, ADD-only extraction stores each new fact as a separate record alongside the old one. Retrieval then decides what is relevant. This preserves the history of how a user's context has changed over time.

What benchmarks does Mem0 use to evaluate memory performance?

Mem0 evaluates against LoCoMo (long-term conversational memory), LongMemEval (single- and multi-session recall), and BEAM (1M and 10M token scale). BEAM is the most production-relevant because it cannot be solved by simply expanding the context window.

Useful sources

Mem0 Research: benchmark results across LoCoMo, LongMemEval, and BEAM

Token-Efficient Memory Algorithm: architecture details: ADD-only extraction, hybrid retrieval, entity linking

OSS v3 Migration Docs: v2 to v3 migration guide

Lost in the Middle: Stanford NLP research on long-context attention degradation

GET TLDR from:

Summarize

Website/Footer

Summarize

Website/Footer

Summarize

Website/Footer

Summarize

Website/Footer